P2 - Data Visualization

Data visualization is a fascinating field because it lets you find patterns in data visually. It can make complex phenomena understandable for people who are not familiar with the subject. Processing, for example, is widely used for data visualization, and there is even a book on data visualization in Processing by one of its inventors. With the rise of data science and data journalism, more specialized languages such as “R” have also emerged.

Now, Unity is by no means optimized to be a data visualization tool. But it is still the very flexible Swiss Army knife we have been working with all along. So we can certainly use it for data visualization, and we can create something more engaging than simple graphs. Because we can deploy functioning applications, we can build complex interactive tools to explore data.

While I was working on the book at the beginning of 2020, the “Coronavirus” and “Covid-19” struck and sparked a lot of data visualizations. So I started to dive into this as well. What I also found very interesting was how the view of the Coronavirus changed over time: how in Germany, where I live, we went from “The risk is very low” to an almost complete “lockdown”. So I thought I needed to find a way to add this layer as well, and not just the data.

In this chapter we will discuss some of the basic ideas for approaching this. It is by far the most complex chapter, and we will even look at some work done in a different programming language (don’t worry, you’ll be fine!). We will not, however, go through everything in fine detail. Instead we will look at the basic ideas, and you can grab the whole project later to go through it yourself.

As quite a few of the scripts are rather long, I will take the freedom to pick out the interesting parts of the scripts and not include every line. But the complete scripts will be supplied as well.

The Project

Before we start talking about the details, let’s discuss the overall aim and scope of the project.

As you can see, our application has three major areas. We have a globe in the upper left, which displays a dot for each country that has confirmed infections. Size and color are based on the number of infections. We can zoom into the planet to be able to select countries in areas with a higher country density, like Europe. We can also rotate the globe and select the dots on it. Selecting a country will update the graph in the bottom area.

The bottom area serves two purposes. On the one hand it simply graphs the currently selected country or countries, depending on the selected option on the left side. But it also is an interactive timeline for the whole project. Dragging on the slider will update the data on the globe as well as on the News Feed in the upper right.

The news feed displays news published by several news sources, which we will scrape from the internet. Here you could, for example, add your own local newspaper or any other source you find interesting.

We will build this in a way to make it reusable later. You could take this and reuse it for a completely different topic, if you have data that makes sense mapping to something like this. So let’s dive in.

Gathering Data

Covid Data

Well, before you can dive into data visualization you need data you can visualize. Gathering this data can sometimes be easy, as it is presented to you in almost perfect condition. In the case of the Covid data this is luckily the case. The Center for Systems Science and Engineering (CSSE) at Johns Hopkins University offers its dataset as a repository on GitHub. You probably know their Covid-19 Dashboard. Opening their data for academic and educational research really is a grand gesture. So: a shoutout to them for their great work!

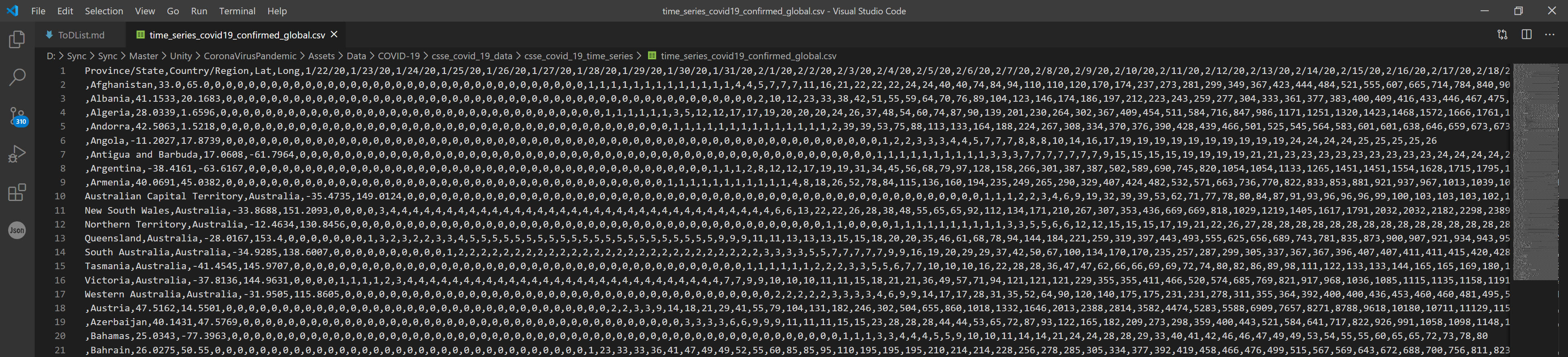

If you take a look at their repository you will find a folder called “csse_covid_19_data” and inside that a “csse_covid_19_time_series” folder. Here we can find the “csv” files. CSV stands for comma-separated values and is something like an Excel sheet without Excel. All values are separated by commas, as the name suggests. You can also find CSV files that use semicolons as separators, which is handy when the values themselves contain commas, for example numbers written with a decimal comma.

CSV file in Visual Studio Code

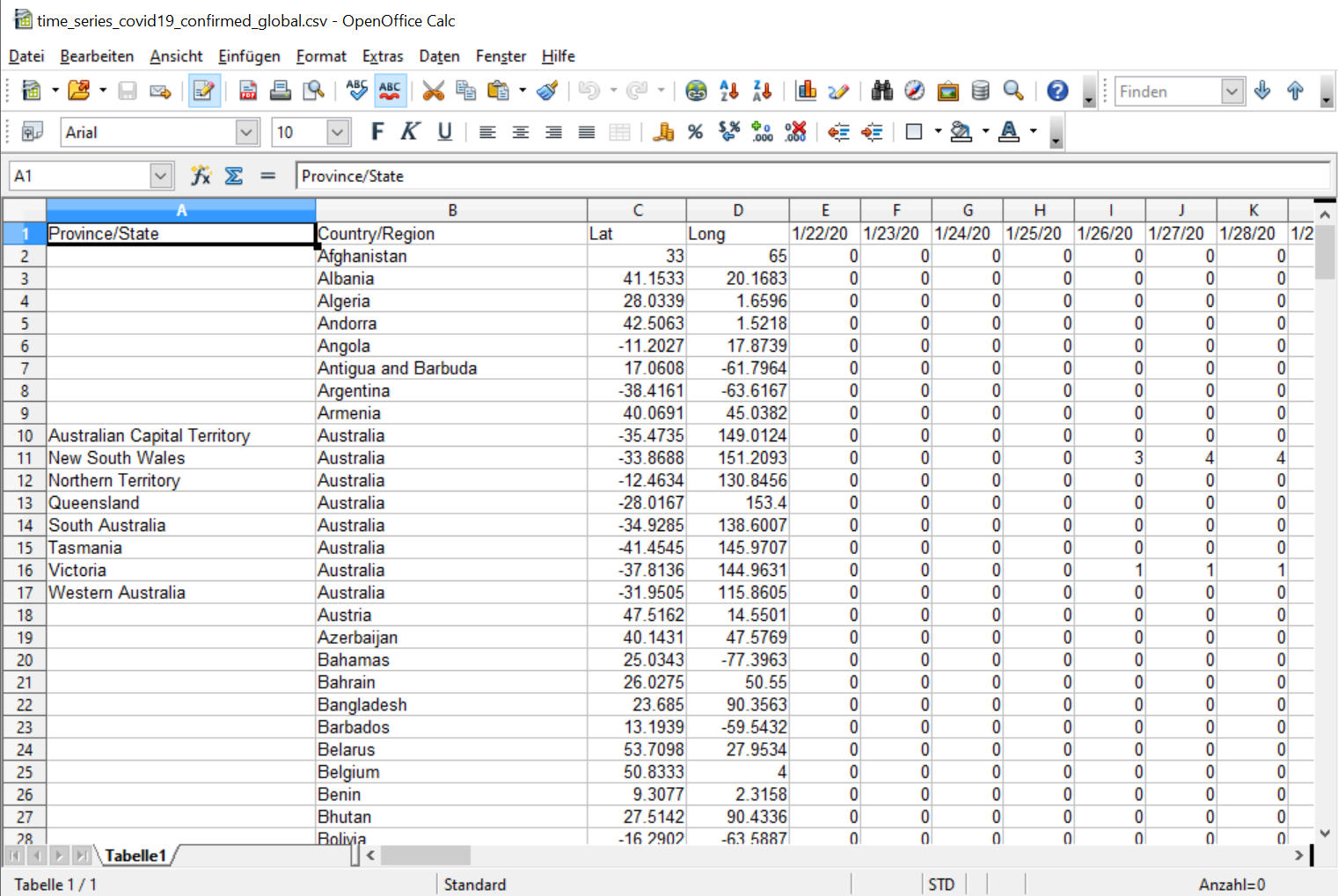

You can download one of those files and open it in any spreadsheet software. I used Open Office 4. When opening the file, it shows a dialog with some import options, such as the separator.

The same file in Open Office

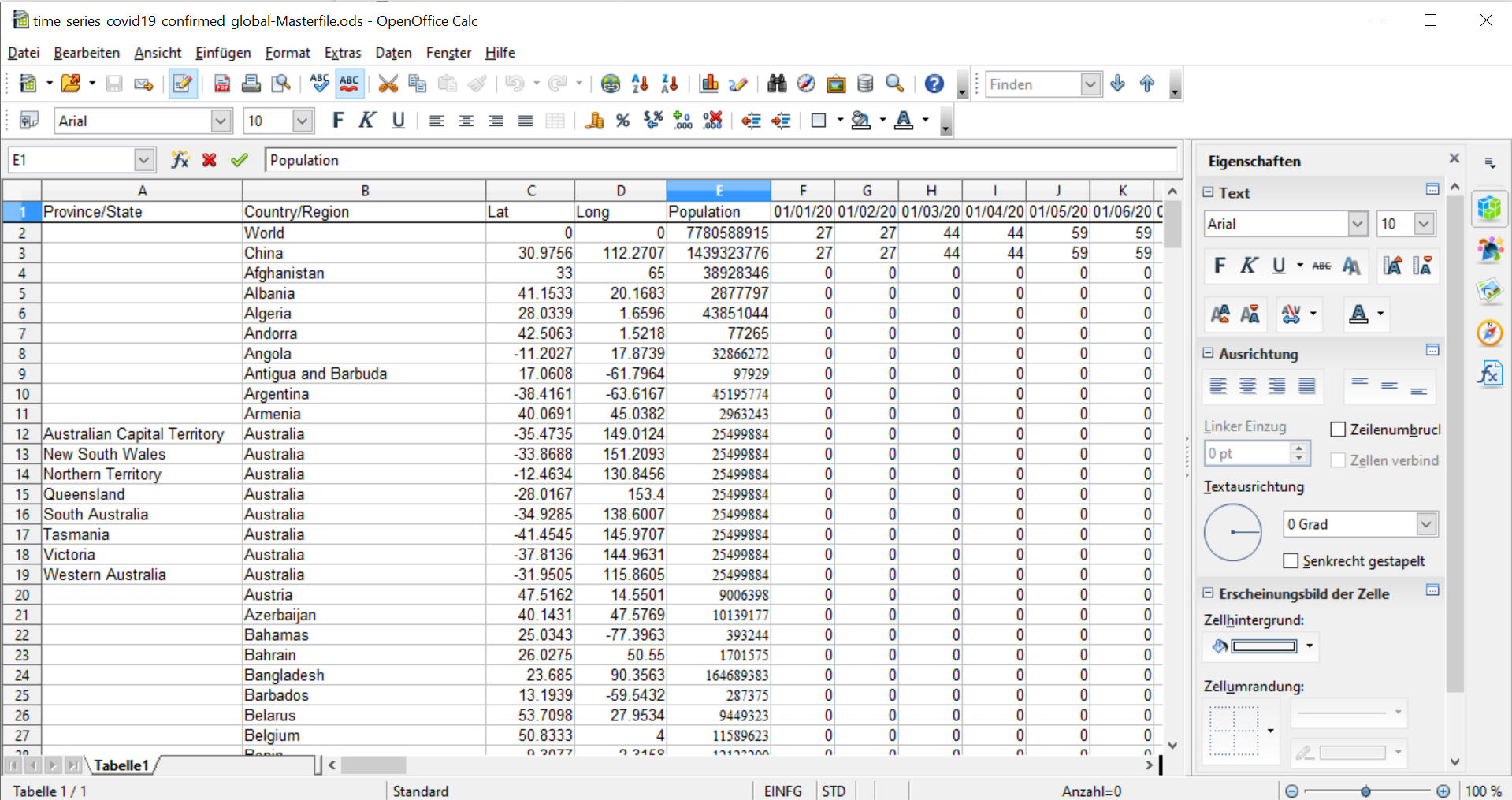

Once open you will see that this is just a simple spreadsheet. We have the countries spread over the rows and all the data that belongs to them in the columns. We have columns for the country name and state (if applicable) as well as their location as latitude and longitude. I then went ahead and did some data wrangling myself. I created a row for the combined world data. You can combine this quickly and easily inside your spreadsheet; you don’t need to write code for this later. Do whatever is fastest. Go for flexibility if you really need it. I also combined the cases for the Chinese states into a single additional row.

I later manually added an additional column for population and tried to find some data for the days before the 22nd of January. That data is not as reliable, but it gives some insight into the beginning of the outbreak as well as some context for early media coverage.

Adding custom data

If you want to add your own data, I suggest you save the file in a spreadsheet format, work in it and only later export to CSV again. We will need the CSV format to read the data in Unity, though. You can also just go ahead and grab the files for confirmed cases global and deaths global. They work just fine (as of 2020-05-02).

News Data

Gathering the news data is a whole different beast. This data is not just up for grabs in a convenient pre-formatted file. We will actually have to go out and gather it ourselves. But we are lazy… So we are not going to do this manually, we will automate it.

We probably could do this using C#. But we won’t. For two reasons.

- In Python it’s very convenient to do so
- I want you to look at different code in a different language

I want you to look at other code because I want you to lose your fear of it. Most languages out there share some common ideas, and being able to switch between them as the need arises is a great skill. You will also be able to adapt your code between languages. Most things can be done in either language. But there are tools that are only available in one language, like Beautiful Soup.

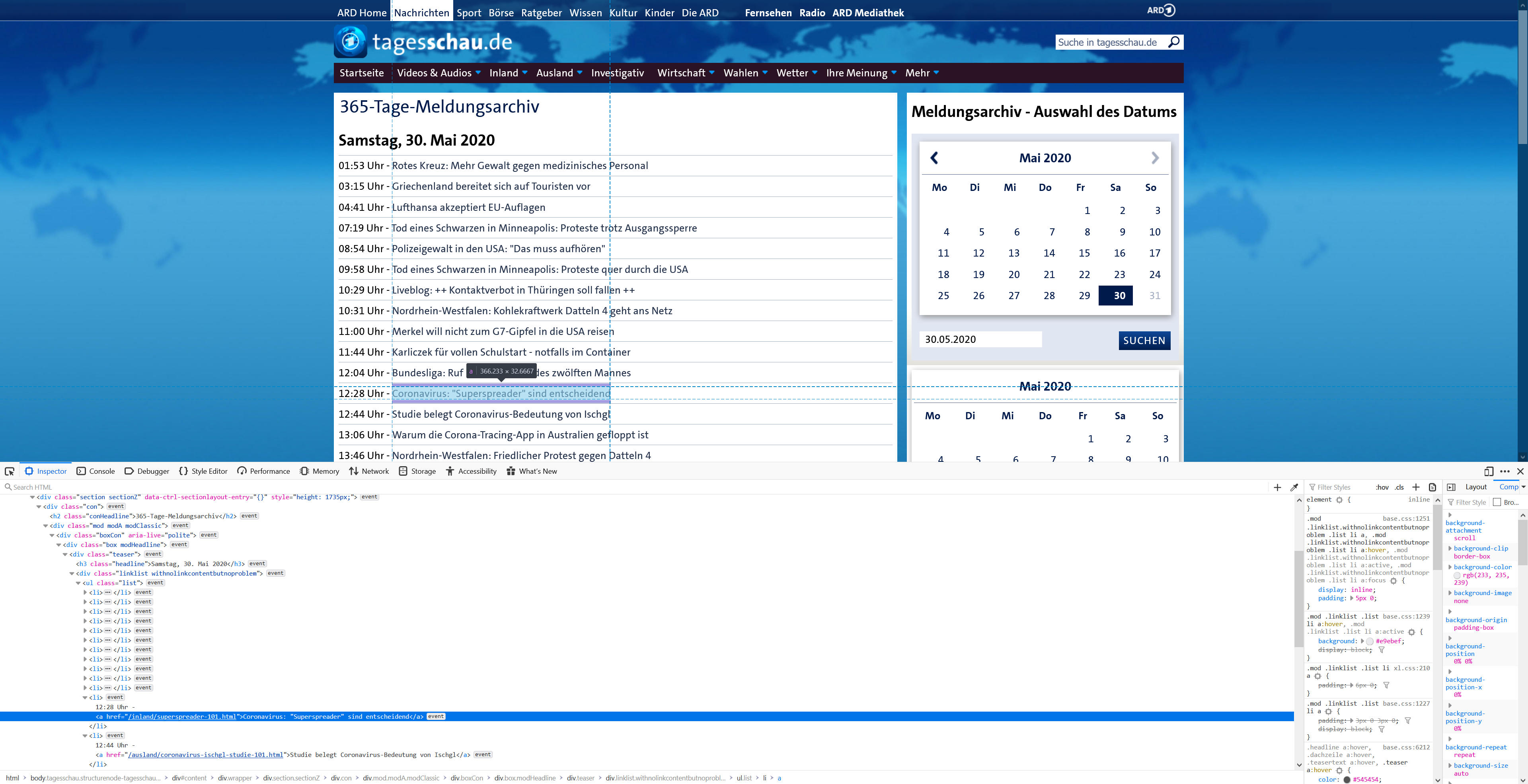

Beautiful Soup is a Python library that can go through HTML files, find elements based on tags, classes and ids, and return them. Thus we can go through a list of news articles and grab their title, their date and a link to them. We will look at one of the easier sites to scrape: the news site of the German public TV station ARD, tagesschau.de.

Archive of the tagesschau

The very nice thing about the “tagesschau” site is that it offers a “Meldungsarchiv”, an archive sorted by day. This makes it rather easy to go through: we can simply search for the items containing the title and the corresponding link. To do so, we open the browser developer tools (F12 in Firefox) and check the inspector. Using the “pick element” tool (Ctrl + Shift + C) we can just select a headline. This shows us at which point in the code we can find the elements. As you can see here, we will later look for an <a> element inside an <li> element. But we also need to narrow down the selection first by finding the “teaser” section, otherwise we would grab every link inside any list on the page.

Preparations

Before we can start looking at this, we need to have Python installed. You can grab it at python.org. Go for a Python 3 version, as 2.7 only exists for legacy reasons. Once Python is installed, open a command prompt or terminal and type:

python -V

This should return the currently installed version and confirm that the installation went smoothly. We also need some libraries to get things working. We will install these using pip, the “package installer for Python”.

Be careful!!

This book assumes you are a novice programmer, so we will install these libraries globally. If you or someone else already uses your computer for Python coding, consider creating a virtual environment to work in.

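We will need Beautiful Soup, requests and lxml, the parser we will hand to Beautiful Soup. The json module we use for saving ships with Python and does not need to be installed.

pip install bs4 requests lxml

Once this is done we can start coding. As in C#, we start by importing our libraries. But instead of the “using” statement, Python uses “import”.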

from bs4 import BeautifulSoup

import requests

import json

import time

from datetime import date

from datetime import timedelta

import datetime

So next up is actually finding a way to access the website.

page = requests.get("https://www.tagesschau.de/archiv/" + CrawlDate +".html")

This is done via requests. The requests library allows you to retrieve a website. Most of this should look familiar to you: we call a method from the requests library, pass it a string, and assign the result to a variable.

But we never initialized the variable! Well, actually… we just did. In Python you don’t put a data type in front of a variable to declare it. It will just take whatever you supply. This is similar to the var keyword in C#. But unlike a var variable, a Python variable is not bound to that type: you can later assign it a value of a completely different type.
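To make this concrete, here is a tiny sketch. The variable name crawl_target is made up for this illustration and is not part of the scraper.

```python
# A name is created the moment we assign to it; no type declaration is needed.
crawl_target = "https://www.tagesschau.de/archiv/"   # currently a str
print(type(crawl_target).__name__)

# Unlike a C# `var`, the same name can later point to a value of another type.
crawl_target = 42                                     # now an int
print(type(crawl_target).__name__)
```

This flexibility is convenient in short scripts, but it also means Python will not warn you if you accidentally reuse a name for something else.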

Now you see this ominous variable CrawlDate. The actual page URLs look like this:

https://www.tagesschau.de/archiv/meldungsarchiv100~_date-20200501.html

The 20200501 is just the date. 2020/05/01. Thus we need to construct the date ourselves for each day we want to grab.

delta = timedelta(days=daysIntoPast)

today = date.today() - delta

day = today.day

month = today.month

year = today.year

CrawlDate = "meldungsarchiv100~_date-" + str(year) + str(month).zfill(2) + str(day).zfill(2)

A lot of this is nothing new either, it’s just written slightly differently in Python. We create a date object, read its day, month and year, and convert them to strings. The .zfill(2) method pads a string with a leading 0 if it has fewer than two characters.
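As an alternative sketch of the same idea, the date can also be formatted in one step with strftime. The function name build_crawl_date and its parameters are my own for this example; the scraper itself builds the string as shown above.

```python
from datetime import date, timedelta

def build_crawl_date(today, days_into_past):
    """Return the archive page name for the day `days_into_past` days before `today`."""
    target = today - timedelta(days=days_into_past)
    # %Y%m%d yields a zero-padded date such as 20200501, so zfill() is not needed.
    return "meldungsarchiv100~_date-" + target.strftime("%Y%m%d")

print(build_crawl_date(date(2020, 5, 2), 1))
```

Passing the start date in as a parameter, instead of calling date.today() inside the function, makes it easy to try the function with a fixed day.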

Now, with the link to the site created and the page requested, let’s find those elements. We first need to create a soup object from the request response:

soup = BeautifulSoup(page.content, "lxml")

You can also print the response to the console if it helps you with debugging. Here the print() function is similar to our Debug.Log.

print(soup)

As we discussed earlier, all of our headlines are contained inside a <div> with the class “teaser” attached. So let’s find it and print it to the console.

teasers = soup.find("div", {"class" : "teaser"})

print(teasers)

What we get is still a soup object, just narrowed down by our selection parameters. So we can keep searching. Let’s grab all the list elements inside it next.

articles = teasers.find_all("li")

Lastly we need to grab a bunch of data from each of those articles, like the title and the link. To do so we create a for loop, which looks more like a C# foreach loop.

for article in articles:
    # A fresh dictionary per article (named entry, since dict is a Python built-in).
    entry = {}
    link = article.find("a")
    linkstring = link.get('href')
    title = link.text.lower()
    if "corona" in title or "corona" in linkstring:
        entry["headline"] = link.text
        entry["source"] = source
        entry["Link"] = linkstring
        NewsEntry.append(entry)
    # elif, so an article matching both keywords is only stored once.
    elif "covid" in title or "covid" in linkstring:
        entry["headline"] = link.text
        entry["source"] = source
        entry["Link"] = linkstring
        NewsEntry.append(entry)

As you can see, we also have some if-statements here. Using these we keep only the articles whose title or link refers to “Corona” or “Covid”.
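If you want to play with the filtering idea without requesting a website, here is a minimal sketch that works on plain (title, link) pairs. The helper names is_relevant and collect_entries and the sample headlines are invented for this illustration. Note that this sketch lower-cases the link as well, which is an assumption on my side.

```python
KEYWORDS = ("corona", "covid")

def is_relevant(title, link, keywords=KEYWORDS):
    """True if any keyword occurs in the lower-cased title or link."""
    haystack = (title + " " + link).lower()
    return any(keyword in haystack for keyword in keywords)

def collect_entries(articles, source):
    """Build one dictionary per relevant (title, link) pair."""
    entries = []
    for title, link in articles:
        if is_relevant(title, link):
            entries.append({"headline": title, "source": source, "Link": link})
    return entries

# Made-up sample data standing in for the scraped list items.
sample = [
    ("Coronavirus: Neue Regeln", "/inland/regeln-101.html"),
    ("Wetter am Wochenende", "/wetter/wochenende-101.html"),
    ("Impfstoff-Forschung", "/ausland/covid-19-impfstoff-101.html"),
]
news = collect_entries(sample, "tagesschau")
print(len(news))
```

Keeping the keywords in one tuple means adding a third search term later is a one-word change.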

And finally we need to save all this. We use the JSON format for that, as we can easily access it in Unity at runtime. To repeat all of this for each day we want to scrape, we put it inside a simple while loop, and our final script looks something like this:

tagesschauScraper.py

To actually run this script you can just open the folder in which your script is saved in a Console or Terminal and call:

python scriptname.py

And we are still not done. You just created a bunch of JSON files, one file per day. But in Unity I would prefer us to simply work with a single file. To combine these, we use this script:

DayCombiner.py

You will need some time to digest all this. Go through it line by line to understand what is happening. It’s not all that hard. If you don’t understand something, use a search engine to find out more. This will really increase your understanding of how code works in general.
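To help with the digesting, here is a rough sketch of the combining idea on its own. It is not the DayCombiner.py script. It assumes a hypothetical naming scheme in which each daily file is called 0.json, 1.json and so on, and it writes the result in a nested layout with the keys country, newsEntries, day and newsEntry, which is the layout the NewsFeedObject class later in this chapter expects.

```python
import json
import tempfile
from pathlib import Path

def combine_days(folder, country):
    """Merge every per-day JSON file in `folder` into one dictionary."""
    combined = {"country": country, "newsEntries": []}
    # Hypothetical naming scheme: 0.json, 1.json, ... where the number is the day index.
    for path in sorted(Path(folder).glob("*.json"), key=lambda p: int(p.stem)):
        with open(path, encoding="utf-8") as handle:
            entries = json.load(handle)
        combined["newsEntries"].append({"day": int(path.stem), "newsEntry": entries})
    return combined

# Demonstration with two tiny made-up daily files in a temporary folder.
with tempfile.TemporaryDirectory() as folder:
    for day, headline in enumerate(["First report", "Second report"]):
        entry = [{"headline": headline, "source": "example", "Link": "/example.html"}]
        Path(folder, f"{day}.json").write_text(json.dumps(entry), encoding="utf-8")
    news = combine_days(folder, "Germany")

print(json.dumps(news, indent=2))
```

Sorting by the numeric file name rather than alphabetically keeps day 10 after day 9.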

Once you understand this, try to adapt it for another site. For sites that are more complex to scrape, “Selenium” and its Python library are great tools.

Loading the data

Data Reader

Now that we have a news feed and the Covid data from the CSSE at JHU, we can start bringing the data into Unity. So let’s take a look at our first script. The Data Reader reads in all the CSVs, which gives us arrays of strings. We need to split them up and convert all the numbers to integer or float values. Lastly we hand this over to the “DataBlockManager”.

DataReader.cs

The first interesting thing is the [ExecuteInEditMode] attribute atop our class. It allows us to run this script from the editor. This is necessary because the steps we are about to do take some time, so we would never want to do them at runtime. We can rerun the script from the editor whenever we have updated our data.

We start our class with a lot of global variables. We really don’t care about them being global, as they are not relevant at runtime anyway, so there is little that could go wrong.

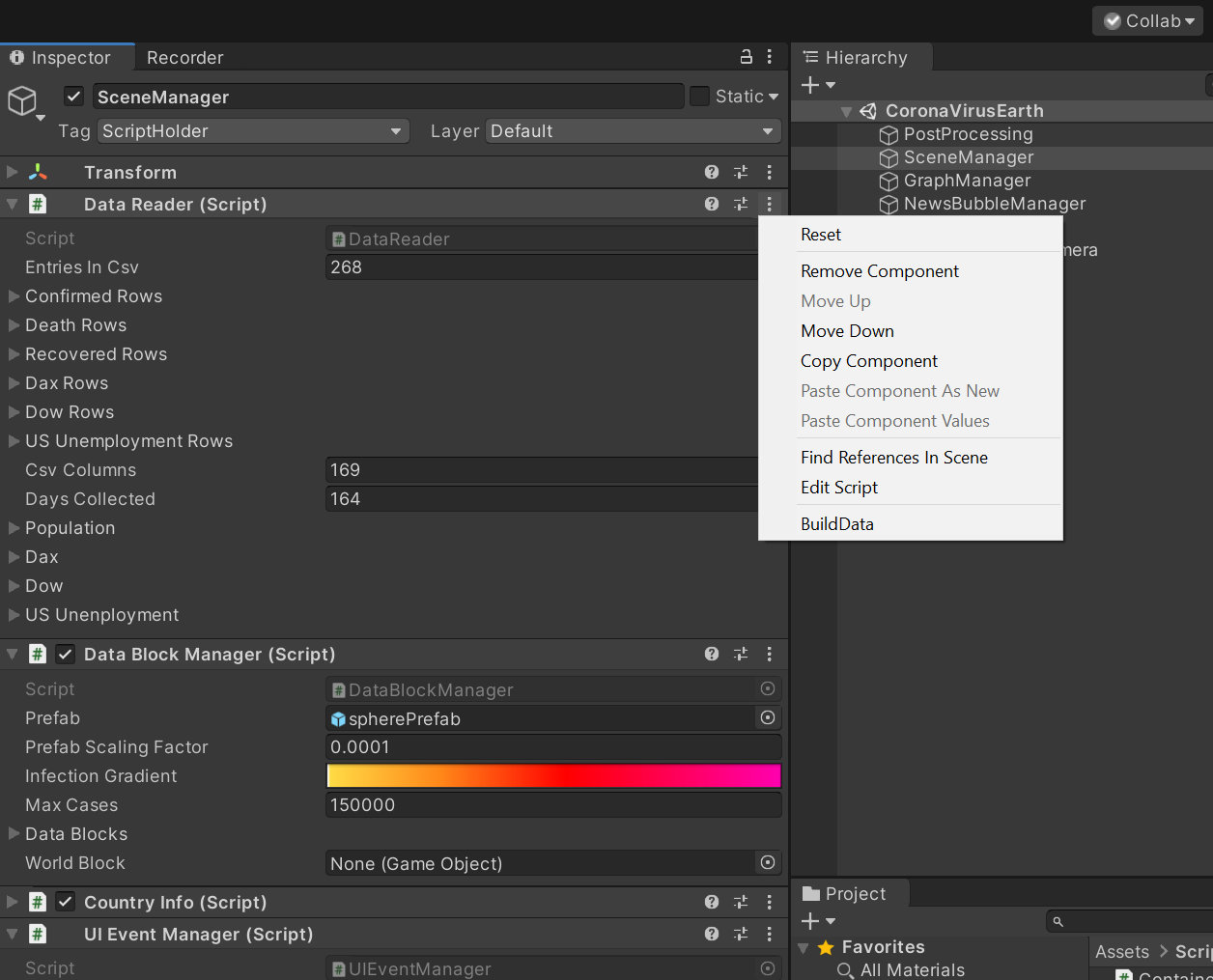

Our first method is buildData(). It is our entry point, as we cannot use Start() or Awake(). Adding [ContextMenu("BuildData")] will add it to the tiny dot menu in the Inspector.

Menu Entry

This one will pretty much just call a bunch of other Methods and initialize some of the Arrays. But let’s head straight into the first method we call: readCSVFile(). (Shortened)

csvConfirmed = Resources.Load<TextAsset>("200426_time_series_covid19_confirmed_global");

ConfirmedRows = csvConfirmed.text.Split(new char[] {'\n'});

Resources.UnloadAsset(csvConfirmed);

First we read in a CSV file as a TextAsset. For this to work the files must reside in a folder named Resources inside your Assets folder. csvConfirmed now contains one extremely long string. So next we split it up using text.Split(). We pass a char array as the split point. The \n represents a line break. Thus we now have an entry in the ConfirmedRows array for each line in the CSV. And if you remember, in the CSV each row represents a country.

The next method we call is evaluateCSVRange(). Here all we want to find out is how many columns our CSV file contains. Thus we split the first entry in the ConfirmedRows array by “,”. This is where the “comma separated value” comes from. We also determine how many days we collected: we subtract 5 from the number of csvColumns, as the first 5 columns hold metadata rather than daily counts: state name, country name, latitude, longitude and population.
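The same two steps, splitting into rows and counting columns, can be sketched in a few lines of Python. The miniature CSV below is made up and only imitates the layout described above, and the constant METADATA_COLUMNS is a name I chose for the 5.

```python
# Made-up miniature of the CSV layout: five metadata columns, then one column per day.
csv_text = (
    "State,Country,Lat,Long,Population,1/22/20,1/23/20,1/24/20\n"
    ",Germany,51.0,9.0,83000000,0,0,0\n"
    ",Italy,43.0,12.0,60000000,0,0,2\n"
)

METADATA_COLUMNS = 5

# splitlines() copes with both \n and \r\n line endings and leaves no empty last row.
rows = csv_text.splitlines()
csv_columns = len(rows[0].split(","))
days = csv_columns - METADATA_COLUMNS

print(len(rows), csv_columns, days)
```

One difference worth knowing: a plain C# Split on '\n' keeps a trailing '\r' on files with Windows line endings and produces an empty last entry if the file ends with a line break, so it can pay off to check for both.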

Now that we know how many rows and columns our data contains we can initialize Arrays that can actually hold this data. This is done inside InitializeMultiArrays().

Once those arrays are initialized we can start splitting up our data.

private void SplitMultiArrays()

{

confirmedString = new string[ConfirmedRows.Length, csvColumns];

DeathString = new string[DeathRows.Length, csvColumns];

for (int i = 0; i < ConfirmedRows.Length; i++)

{

for (int j = 0; j < csvColumns; j++)

{

confirmedString[i, j] = Regex.Split(ConfirmedRows[i], ",(?=(?:[^\"]*\"[^\"]*\")*[^\"]*$)")[j];

DeathString[i, j] = Regex.Split(DeathRows[i], ",(?=(?:[^\"]*\"[^\"]*\")*[^\"]*$)")[j];

}

}

}

To split these up we use regular expressions (Regex). We can’t just split by comma, as some entries contain commas themselves but are enclosed in quotes, e.g. “Korea, South”. Apart from that, this is the same thing we did earlier with the first row to get the number of columns.
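If the regular expression looks like magic, it helps to try it on a single line. The sketch below uses the same pattern in Python and compares it with a naive split and with Python's csv module. The sample line is made up.

```python
import csv
import re

# Same pattern as in the C# listing: split on commas that are followed by an
# even number of double quotes, i.e. commas that are not inside a quoted field.
CSV_SPLIT = re.compile(r',(?=(?:[^"]*"[^"]*")*[^"]*$)')

line = ',"Korea, South",36.0,128.0'

naive = line.split(",")
with_regex = CSV_SPLIT.split(line)
with_csv_module = next(csv.reader([line]))

print(naive)
print(with_regex)
print(with_csv_module)
```

The naive split cuts the country name in two, the regex keeps it in one piece but leaves the quotes in place, and the csv module also strips the quotes.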

Next up is sorting this data into new, simpler arrays of the proper types.

private void SortCSVs()

{

for (int i = 1; i < confirmedString.GetLength(0); i++)

{

for (int j = 0; j < csvColumns; j++)

{

if (j < 2)

{

CountryNames[i-1,j] = confirmedString[i, j];

}else if (j == 2)

{

LatLong[i-1, 0] = (float) Convert.ToDouble(confirmedString[i, j]);

}else if(j == 3)

{

LatLong[i-1, 1] = (float) Convert.ToDouble(confirmedString[i, j]);

}else if(j == 4)

{

if (!string.IsNullOrEmpty(confirmedString[i, j]))

{

Population[i] = (int)Convert.ToDouble(confirmedString[i, j]);

}

else

{

Population[i] = 0;

}

}

else

{

ConfirmedCases[i-1, j-5] = Convert.ToInt32(confirmedString[i, j]);

Death[i - 1, j-5] = Convert.ToInt32(DeathString[i, j]);

}

}

}

}

Here we simply check in which row and column a certain entry is and then decide which array to add it to. We also finally convert the string data into numbers. Where a value cannot be converted to an integer directly, we convert it to a double first and cast the result. This is a rather lengthy process, as we have about 270 country entries (some countries are split into states) and, well, a lot of days. All in all, this script does quite a bit of data crunching.
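The detour via a floating point number exists in Python too, which makes it a nice small experiment. The helper to_int and the sample row are made up, and treating empty cells as zero is an assumption of this sketch.

```python
def to_int(cell):
    """Convert a CSV cell such as '12', '12.0' or '' to an integer."""
    cell = cell.strip()
    if not cell:
        return 0          # treat empty cells as zero, an assumption for this sketch
    # int('12.0') raises ValueError, so go through float first.
    return int(float(cell))

row = ["", "Germany", "51.0", "9.0", "83000000", "0", "12.0", " 27\r"]
cases = [to_int(cell) for cell in row[5:]]
print(cases)
```

The strip() call also removes a stray carriage return at the end of the last cell, which can appear when the file was saved with Windows line endings.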

Finally we create a Data Block Manager and call the createDataBlocks() method.

DataBlockManager

The DataBlockManager sits somewhere in between the editor world and execution at runtime. This is architecturally bad. Hopefully no one will ever read these lines because I got around to fixing it. If you are still reading this, it serves as an example of what happens while working.

DataBlockManager.cs

The DataBlockManager creates the spheres which are placed on the globe. The createContainers() method instantiates a prefab sphere and adds the ContainerData component to it. The ContainerData component holds data such as the country name and confirmed cases. But we actually have to copy these over from our arrays in the DataReader.

We also parent these spheres to the globe, as we want them to move along. Finally, we add them to a list of data blocks. We need to do this to later be able to scale all of them on UI interaction.

private void createContainers()

{

GameObject currentCountry;

ContainerData currentContainerData;

//i = 1 to skip description line

for (int i = 1; i < _dataReader.confirmedString.GetLength(0); i++)

{

currentCountry = Instantiate(prefab, new Vector3(0, 0, 0), Quaternion.identity);

currentContainerData = currentCountry.AddComponent<ContainerData>();

currentContainerData.initializeArrays(_dataReader.ConfirmedCases.GetLength(1),

_dataReader.Death.GetLength(1), _dataReader.ConfirmedCases.GetLength(1));

currentContainerData.countryName = _dataReader.CountryNames[i - 1, 1];

currentContainerData.stateName = _dataReader.CountryNames[i - 1, 0];

currentContainerData.latitude = _dataReader.LatLong[i - 1, 0];

currentContainerData.longitude = _dataReader.LatLong[i - 1, 1];

currentContainerData.population = _dataReader.Population[i];

for (int j = 0; j < _dataReader.ConfirmedCases.GetLength(1); j++)

{

//TODO: clean this up

currentContainerData.confirmedCases[j] = _dataReader.ConfirmedCases[i - 1, j];

currentContainerData.deaths[j] = _dataReader.Death[i - 1, j];

}

currentCountry.transform.localScale = Vector3.one * 3;

currentCountry.transform.parent = globe.transform;

dataBlocks.Add(currentCountry);

}

}

With this the Unity Editor part of the DataBlockManager is done. We can now look at the runtime parts. In the Start() method we subscribe to two events: UIEventManager.OnDayChange and DragToRotate.OnZoomChange. These are fired when the day on the timeline changes or when the user zooms into or out of the globe. We need to listen to these events, as we need to update the scale of the spheres as soon as this happens.
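Before looking at the handler itself, here is a sketch of the scaling idea in Python. It assumes that HelperMethods.Remap is an ordinary linear remap with the argument order (value, from minimum, from maximum, to minimum, to maximum). The function names remap and sphere_scale are my own.

```python
import math

def remap(value, from_min, from_max, to_min, to_max):
    """Linearly map value from [from_min, from_max] to [to_min, to_max]."""
    t = (value - from_min) / (from_max - from_min)
    return to_min + t * (to_max - to_min)

def sphere_scale(cases, earth_distance, scaling_factor=1.0):
    """Uniform scale for one sphere; 0 hides countries without cases."""
    if cases <= 0:
        return 0.0
    # Closer camera (smaller distance) means smaller spheres.
    zoom = remap(earth_distance, 500, 2000, 0.25, 1.0)
    return scaling_factor * math.log(cases) * zoom

for cases in (10, 1_000, 100_000):
    print(cases, round(sphere_scale(cases, 2000), 2))
```

The logarithm keeps countries with huge case numbers from covering the whole globe. A side effect is that a country with exactly one case gets a scale of 0, because the natural logarithm of 1 is 0.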

private void UpdateDay(int day)

{

this.day = day;

for (int i = 0; i < dataBlocks.Count; i++)

{

int cases = dataBlocks[i].GetComponent<ContainerData>().confirmedCases[day];

if (cases > 0)

{

Color properColor = lookUpColor(cases);

dataBlocks[i].GetComponentInChildren<Renderer>().material.color = properColor;

dataBlocks[i].transform.localScale = (prefabScalingFactor * Mathf.Log(cases)) * Vector3.one * HelperMethods.Remap(earthDistance,500,2000, .25f, 1);

}

else

{

dataBlocks[i].transform.localScale = Vector3.zero;

}

if (dataBlocks[i].GetComponent<ContainerData>().countryName =="China" && !(dataBlocks[i].GetComponent<ContainerData>().stateName == ""))

{

dataBlocks[i].transform.localScale = Vector3.zero;

}

}

}

UIEvent Manager

As I just mentioned the UIEventManager, let us take a look at it.

UIEventManager.cs

The first ChangeDay() method is hooked up to the UI slider at the bottom of the scene. As soon as the slider is moved and its value changes, the method receives the current day on the timeline. The UIEventManager then fires an event and broadcasts the day. Any object in the scene that relies on the current day, like the dataBlocks on the globe (for their scale) or the news feed, can simply subscribe to the event, grab the day and update its own data.
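The broadcast idea is independent of Unity and can be sketched in plain Python. The class DayChangeEvent below is a made-up stand-in for a C# event and not how the UIEventManager is written.

```python
class DayChangeEvent:
    """Minimal stand-in for a C# event: a list of callbacks."""

    def __init__(self):
        self._subscribers = []

    def subscribe(self, callback):
        self._subscribers.append(callback)

    def fire(self, day):
        for callback in self._subscribers:
            callback(day)

on_day_change = DayChangeEvent()
log = []

# Two independent listeners, comparable to the globe and the news feed.
on_day_change.subscribe(lambda day: log.append(f"globe shows day {day}"))
on_day_change.subscribe(lambda day: log.append(f"news feed shows day {day}"))

# What the slider callback would do when its value changes.
on_day_change.fire(42)
print(log)
```

The sender never needs to know who is listening, which is why new listeners can be added without touching the slider code.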

The second ChangeDay() method is just an overload of this method. It is called as soon as the earth has completed a full rotation. This allows the whole thing to more or less run on autopilot.

CountryInfo

CountryInfo.cs

One of the scripts subscribed to the UIEventManager event is the CountryInfo component. As soon as the event is received, it updates the written statistics on the graph for the last selected country. This happens in UpdateText().

private void UpdateText()

{

try

{

CountryInfoText.GetComponent<Text>().text = "<b>" + currentContainerData.countryName + "</b>\n" +

"Confirmed Cases: " + currentContainerData.confirmedCases[day] + "\n" +

"Death: " + currentContainerData.deaths[day] + "\n" +

"Recovered: " + currentContainerData.recovered[day];

}

catch (Exception e)

{

CountryInfoText.GetComponent<Text>().text = "";

Debug.Log("No Country selected!");

// No rethrow: an empty label is the intended fallback.

}

}

Here we also catch the error that occurs should there ever be no country selected. Normally this should not happen, as we set a starting country, but well. Who knows.

Container Interaction

We also need to update the graph, though not on OnDayChange but when we actually select a different country on the globe. This selection is handled inside the ContainerInteraction script. Here we simply cast a ray and check which object it hits.

private void SelectDataContainer()

{

Ray ray = Camera.main.ScreenPointToRay(Input.mousePosition);

RaycastHit hit;

if (Physics.Raycast(ray, out hit))

{

if (hit.transform.gameObject.GetComponent<ContainerData>() != null)

{

GraphManager.setCurrentContainer(hit.transform.gameObject.GetComponent<ContainerData>());

}

}

}

As you can see, we pass the selected data block to the GraphManager.

GraphManager

The GraphManager takes care of what kind of graph to actually display as we offer a bunch of different options.

GraphManager.cs

To take care of checking what we are going to graph we use an enum.

enum GraphType{Single, Multi, newCases, MultiPer100K, Dax, Dow, Unemployment,}

Enums are a great way to create readable switches. The line above simply declares the type of enum we want to build as well as all its states. We then also have to declare a variable of that type and assign it a value:

GraphType myGraphType;

myGraphType = GraphType.Single;

With this set up we can then build the proper switch statement.

private void SelectTypeOfGraphToDisplay()

{

switch (myGraphType)

{

case GraphType.Single:

GraphConfirmedCases();

break;

case GraphType.Multi:

if (ContainerDataSets.Count > 0)

{

GraphContrySelection();

}

break;

case GraphType.MultiPer100K:

GraphPer();

break;

case GraphType.Dax:

GraphDax();

break;

case GraphType.Dow:

break;

case GraphType.newCases:

GraphNewCases();

break;

case GraphType.Unemployment:

break;

default:

GraphConfirmedCases();

break;

}

}

The default method we call is GraphConfirmedCases(). It graphs the confirmed cases and deaths for the given country.
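For comparison, here is how the same enum-plus-switch idea could look in Python. This is only a sketch with three of the graph types. The handler functions are placeholders that return a string instead of drawing anything.

```python
from enum import Enum, auto

class GraphType(Enum):
    SINGLE = auto()
    MULTI = auto()
    NEW_CASES = auto()

def graph_confirmed_cases():
    return "confirmed cases"

def graph_country_selection():
    return "country selection"

def graph_new_cases():
    return "new cases"

# A dictionary plays the role of the switch statement.
HANDLERS = {
    GraphType.SINGLE: graph_confirmed_cases,
    GraphType.MULTI: graph_country_selection,
    GraphType.NEW_CASES: graph_new_cases,
}

def select_graph(graph_type):
    # .get() with a fallback mirrors the default branch.
    handler = HANDLERS.get(graph_type, graph_confirmed_cases)
    return handler()

print(select_graph(GraphType.MULTI))
```

Mapping enum values to functions keeps the selection logic in one place, just like the switch does in C#.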

For each graph we first need to determine a maxValue to properly scale it along the Y-axis. To do so we grab the last entry in the confirmedCases array; because the case counts are cumulative, the last entry is normally also the largest. We then create a Vector3[] with the positions of the graph. To do so we pass our data into a helper method called GraphCalculator, which remaps the values to match the proper positions in the application.
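A minimal sketch of such a remapping could look like this in Python. The function graph_points, its width and height parameters and the sample numbers are assumptions for illustration; the real helper works with Vector3 values and the dimensions of the graph area in the scene.

```python
def graph_points(values, width, height):
    """Return (x, y, z) tuples that spread `values` over a width x height area."""
    max_value = values[-1]            # cumulative data: the last entry is the largest
    last_index = len(values) - 1
    points = []
    for index, value in enumerate(values):
        # Guard against division by zero for a single value or all-zero data.
        x = index * width / last_index if last_index else 0.0
        y = value * height / max_value if max_value else 0.0
        points.append((x, y, 0.0))
    return points

confirmed = [0, 5, 20, 80, 100]
for point in graph_points(confirmed, width=400, height=100):
    print(point)
```

The first point ends up in the lower left corner of the area and the last one in the upper right, no matter how large the numbers are.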

We then pass the returned values as a list to the Grapher.

Grapher

The Grapher is responsible for actually drawing the graph. It manages a bunch of LineRenderer components. These take in the Vector3 arrays and are colored according to their index in the positions list.

public void drawGraphs(List<Vector3[]> positions, float lineWidth)

{

ResetGraphs();

int itemsInList = positions.Count;

int entries = positions.ElementAt(0).Length;

for (int i = 0; i < itemsInList; i++)

{

graphs[i].positionCount = entries;

graphs[i].startWidth = lineWidth;

graphs[i].endWidth = lineWidth;

graphs[i].SetPositions(positions.ElementAt(i));

}

}

The News Feed

The last big element we need to discuss is the NewsFeedManager. It is responsible for filling the news feed in the upper right of our application. To do this it relies on two helper classes: the NewsFeedObject and the NewsFeedReader.

NewsFeedManager.cs

NewsFeedObject

The NewsFeedObject is a rather interesting construction. It does not inherit from MonoBehaviour like most of our classes. This is important, as the class resembles the structure of our JSON file. This is also why we have classes defined inside a class. The data read by the NewsFeedReader can then be handed over into this NewsFeedObject.
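To make the mirrored structure concrete, here is a sketch of what such a file could look like, read back in Python. The values are invented; only the key names follow the fields of the C# class shown next.

```python
import json

# Invented sample data; only the key names follow the C# class.
news_json = """
{
  "country": "Germany",
  "newsEntries": [
    {
      "day": 0,
      "newsEntry": [
        {"headline": "Example headline", "source": "example", "link": "/a.html"}
      ]
    },
    {
      "day": 1,
      "newsEntry": []
    }
  ]
}
"""

feed = json.loads(news_json)

# Each nesting level in the JSON corresponds to one nested class.
for entries in feed["newsEntries"]:
    print(entries["day"], [entry["headline"] for entry in entries["newsEntry"]])
```

Reading the JSON from the outside in gives you the class layout: the outer object, a list of days, and per day a list of news entries.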

[System.Serializable]

public class NewsFeedObject

{

public string country;

[System.Serializable]

public class NewsEntries

{

public int day;

[System.Serializable]

public class NewsEntry

{

public string headline;

public string source;

public string link;

}

public NewsEntry[] newsEntry;

}

public NewsEntries[] newsEntries;

}

NewsFeedReader

The NewsFeedReader is responsible for reading News.json from the hard disk and deserializing it. In the ReadNewsStream method a StreamReader is created and passed the path of the news file to read. The file content is then stored as a string. Using the Newtonsoft.Json library, the method deserializes the JSON string into a NewsFeedObject.

public NewsFeedObject ReadNewsStream(string path)

{

StreamReader reader = new StreamReader(basePath + path + ".json");

string json = reader.ReadToEnd();

reader.Close();

_newsFeedObject = JsonConvert.DeserializeObject<NewsFeedObject>(json);

return _newsFeedObject;

}

Things left to do

Of course we also need to hook all these things up to a user interface. I will not go over this though, as you will soon be able to grab the whole project and take a look for yourself. If you have been through the UI chapter, there is nothing new to be done here.

In the package you will also find some more tiny scripts which are responsible for user interaction, for example handling the zooming in and out of the globe.